Max Hodak — Writings

Buggy technology is indistinguishable from malware

September 2020

A while ago, the camera on my phone started acting up: opening it would show an image for a couple seconds but then go black. Simultaneously, when I turned my wireless headphones on there would be a couple seconds of sidetone (hearing through your own microphone) before it cut to the expected silence.

I promptly got a new phone and the old one was sent to forensics.

A little while after that, my stereo receiver started randomly losing its internet connection, causing streaming from Spotify to break. If I reset it to factory settings – and retrained the room calibration, speaker configuration, etc – it would come back, but within a week it would decide to give up on internet connectivity once again.

All of this was both frustrating and unnerving. We’ve become inured to the poor reliability and security of modern electronics – they are simply too complicated – and one thing I’ve had a hard time reconciling is my feeling that we have to do better with the reality that most things do not experience a strong economic pressure to do so. It is extraordinarily expensive to develop avionics software and other similar safety-critical things, and this is a sign to me both that the fundamental toolchain is broken and that most consumers simply don’t care. Even after the OPM hack, the DNC cyberattacks, the Equifax breach, and the Sony Pictures hack still no one has developed any good firewalls I would actually trust with my life. They are all too complex, composed of millions of lines of unproven code written by humans.

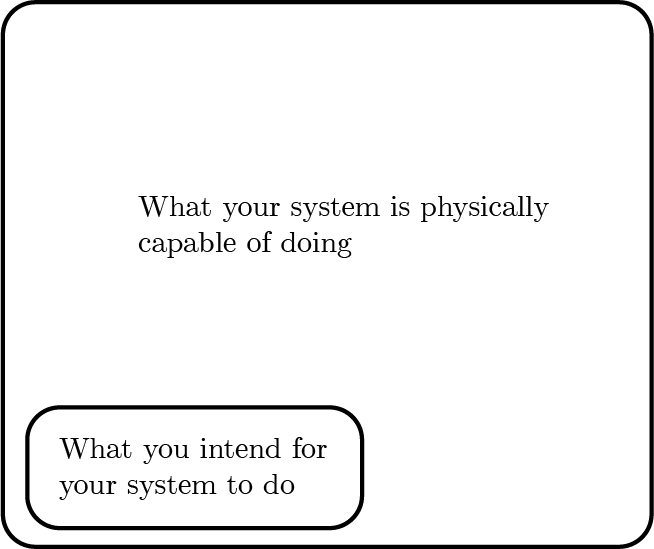

Fundamentally, I see the problem as being the fact that the space of things a system can do being a tiny subset of things the systems is physically capable of doing.

There is no meaningful difference between buggy software and insecure software – security vulnerabilities are just bugs used for a malicious intent. In order to build truly secure systems, we must shrink the space of things the machine is capable of doing to things we want it to do and formally prove this.

It is enormously difficult to formally verify a program of any meaningful size. One common approach – used by some autopilots, among other things – is to write a model DSL and a small code generator that is feasible to formally verify, and then use that to generate the much larger source code of the actual program, which by extension is verified. This is a good idea and I’d love to see things like this used more widely.

Another approach is to use a language with lots of safety features like strong algebraic typing, immutable memory and no dynamic allocation. These are often functional languages and can go a long way towards making it so that if it compiles, it’s probably correct.

Ultimately you also need awareness of the hardware you are running on: no one was talking about speculative execution attacks five years ago, but now they’re just one example of practical attacks stemming from complex hardware performance optimizations.

I’m a big fan of SeL4 and especially its verified RISCV implementation. Yes, RISCV made some microarchitecture trade-offs that are somewhat academically pure at a cost to practicality, but for something like a brain implant you definitely want to prioritize making the intended-behavior and possible-behavior bubbles as close to equal as possible.

The cryptocurrency world also feels these issues acutely: bugs in smart contracts quickly become large financial losses. Long term, I think the investments they are making into formal verification could be one of their largest contributions back to the broader ecosystem.

These problems are all things Neuralink is going to have to invest in over time in order to ensure that the implants are reliable and secure. We will probably need to go way beyond traditional guidelines like MISRA and develop more powerful formal verification tools and code generation for verified hardware, but I think that’s only the tip of the iceberg towards systems that I’d really trust to put in my brain and connect to an increasingly hostile internet. There definitely needs to be much greater investment in these kinds of things, and hopefully an ecosystem develops that explores many possible approaches in parallel.